How to Prepare for a Machine Learning Interview?

This post serves as a one-stop shop for information on how to prepare for your upcoming machine learning interview.

The top 20 machine learning job interview questions for 2023 will be covered in this article.

We’ll concentrate on real-world scenarios and inquiries that businesses like Microsoft and Amazon frequently use in their hiring processes.

People searching for a fast review of machine learning fundamentals may find this article helpful as well.

We’ve covered a wide range of machine learning interview questions in this article for both novices and seasoned professionals, assuring full preparation for your upcoming ML interview.

How does machine learning work?

Machine learning is a branch of artificial intelligence that involves creating algorithms and statistical models that let computers learn from experience and become better at completing tasks.

A computer is said to learn from experience E with respect to a task T if its performance on T, as measured by a performance measure P, improves with experience E.

1: How is machine learning different from general programming?

We have the data and the logic in general programming, and we use these two to produce the solutions.

However, with machine learning, we provide the data and the solutions, then let the computer infer the reasoning from them so that it may apply that logic to resolve future queries.

Additionally, there are situations when it is impossible to express the logic in code; in these cases, machine learning steps in to learn the logic itself.

2: What are some real-life applications of clustering algorithms?

Numerous data science fields, including image classification, customer segmentation, and recommendation engines, can benefit from the clustering technique.

One of its most frequent applications is in market research and customer segmentation, which are then used to focus on a particular market segment to grow enterprises and provide profitable results.

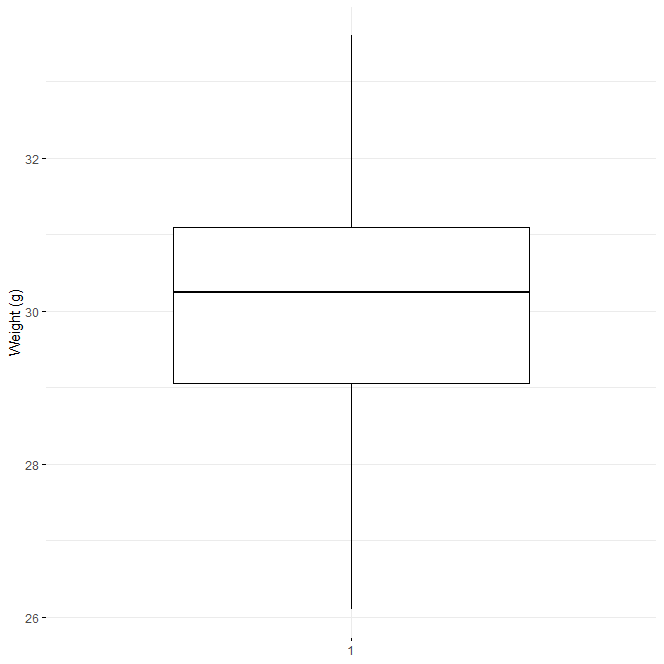

3: How to choose an optimal number of clusters?

We determine the ideal number of clusters that our clustering algorithm should attempt to build by using the elbow method.

The fundamental idea behind this method is that the error value decreases as the number of clusters rises.

Beyond the optimal number of clusters, however, the drop in the error value becomes negligible, so we choose the point at which this starts to occur (the “elbow” of the curve) as the optimal number of clusters.
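The elbow idea can be sketched with a tiny one-dimensional k-means written in plain Python. This is only an illustration under stated assumptions: the data points, the deterministic initialisation, and the function name `kmeans_sse` are all made up for this example and are not taken from any library.

```python
def kmeans_sse(points, k, iterations=20):
    """Run a tiny 1-D k-means and return the sum of squared errors (inertia)."""
    ordered = sorted(points)
    n = len(ordered)
    # Deterministic initialisation (an assumption of this sketch):
    # take the middle element of each of k equal chunks of the sorted data.
    centroids = [ordered[(2 * i + 1) * n // (2 * k)] for i in range(k)]
    for _ in range(iterations):
        clusters = [[] for _ in range(k)]
        for x in points:
            # Assign each point to its nearest centroid.
            nearest = min(range(k), key=lambda j: abs(x - centroids[j]))
            clusters[nearest].append(x)
        # Move each centroid to the mean of its cluster; keep it if the cluster is empty.
        centroids = [
            sum(c) / len(c) if c else centroids[j] for j, c in enumerate(clusters)
        ]
    # Error value: squared distance of every point to its closest centroid.
    return sum(min((x - c) ** 2 for c in centroids) for x in points)


# Toy data with three visible groups (hypothetical values).
points = [1.0, 1.5, 2.0, 5.0, 5.5, 6.0, 11.0, 11.5, 12.0]
sse_by_k = {k: kmeans_sse(points, k) for k in range(1, 6)}
for k, sse in sse_by_k.items():
    print(k, round(sse, 3))
```

On this toy data the error value drops sharply up to three clusters and only marginally afterwards, so three would be read off as the elbow.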

4: What is feature engineering? How does it impact the model’s functionality?

Feature engineering is the process of creating new features from already existing ones.

Sometimes there is a very subtle mathematical relationship between some features that, if carefully investigated, can be used to create additional features.

Additionally, sometimes a single data column will be presented that contains several different bits of information combined.

When this happens, creating new features and utilizing them aids in both gaining deeper insights into the data and, if the features produced are significant enough, greatly enhancing the performance of the model.
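As a small illustration, the sketch below derives new features from a combined timestamp column and from the relationship between two numeric columns. The column names (`timestamp`, `price`, `area_sqft`) and the values are hypothetical and chosen only for this example.

```python
from datetime import datetime

# Hypothetical raw rows: one column packs date and time together.
rows = [
    {"timestamp": "2023-03-15 08:30", "price": 300000.0, "area_sqft": 1500.0},
    {"timestamp": "2023-07-02 19:45", "price": 450000.0, "area_sqft": 1800.0},
]


def add_features(row):
    """Return a copy of the row with derived features added."""
    ts = datetime.strptime(row["timestamp"], "%Y-%m-%d %H:%M")
    enriched = dict(row)
    # One combined column split into several pieces of information.
    enriched["month"] = ts.month
    enriched["hour"] = ts.hour
    enriched["is_weekend"] = ts.weekday() >= 5  # Saturday = 5, Sunday = 6
    # A new feature built from a relationship between two existing ones.
    enriched["price_per_sqft"] = row["price"] / row["area_sqft"]
    return enriched


engineered = [add_features(r) for r in rows]
```

Whether such derived features actually help has to be checked against the model's validation performance.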

5: What exactly is a machine learning hypothesis?

The term “hypothesis” is frequently used in the context of supervised machine learning.

In supervised learning, we use the independent features and the target variable to build an approximate function that maps the feature space to the target variable. This approximation of the mapping is called a hypothesis.

6: How can the effectiveness of the clusters be assessed?

Inertia, also called the Sum of Squared Errors (SSE), the Silhouette score, and l1 and l2 scores are a few examples of metrics.

Inertia and the Silhouette score are the two measures most frequently used to gauge how well the clusters perform.

The Silhouette score is high when the created clusters are compact and clearly differentiated, although it has a high computational cost.

7: Why do we choose smaller learning rate values?

Smaller learning rate values encourage the training process to converge more gradually and steadily toward the global optimum rather than oscillate around it.

This is due to the fact that smaller adjustments to the model weights at each iteration caused by a lower learning rate might make the updates more accurate and stable.

The model weights may update too quickly with an excessive learning rate, which could lead the training process to overshoot the global optimum and completely miss it.

Therefore, it is vital to utilize lower values of the learning rate in order to prevent this oscillation of the error value and obtain the best weights for the model.
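The effect can be seen on a deliberately simple problem. The sketch below runs plain gradient descent on f(w) = w², whose minimum is at w = 0; the function name, starting point, and the two learning rates are assumptions chosen for illustration.

```python
def gradient_descent(learning_rate, start=5.0, steps=50):
    """Minimise f(w) = w**2, whose gradient is 2*w and whose minimum is at w = 0."""
    w = start
    for _ in range(steps):
        w -= learning_rate * 2 * w  # standard gradient descent update
    return w


w_small = gradient_descent(learning_rate=0.1)  # each step multiplies w by 0.8
w_large = gradient_descent(learning_rate=1.1)  # each step multiplies w by -1.2

print(abs(w_small), abs(w_large))
```

With the smaller rate the weight shrinks steadily toward the optimum, while with the larger rate every update overshoots and the weight moves further away.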

8: What is machine learning overfitting and how can it be prevented?

When a model learns patterns as well as the noise in the data, this is known as overfitting, and it results in high performance on training data but very low performance on data that the model has never seen before.

There are several strategies we can employ to prevent overfitting, including:

Early stopping, which halts the model’s training once its performance on the validation data stops improving.

Utilizing regularization techniques like L1 or L2 regularization, which penalize the weights of the model to prevent overfitting.
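Both strategies can be sketched in a few lines. The helper names, the `patience` parameter, and the validation losses below are hypothetical; real training frameworks provide their own versions of these ideas.

```python
def early_stopping_epoch(val_losses, patience=2):
    """Return the index of the epoch at which training would stop."""
    best_loss = float("inf")
    epochs_without_improvement = 0
    for epoch, loss in enumerate(val_losses):
        if loss < best_loss:
            best_loss = loss
            epochs_without_improvement = 0
        else:
            epochs_without_improvement += 1
            if epochs_without_improvement >= patience:
                return epoch
    return len(val_losses) - 1  # never triggered: train to the end


def l1_penalty(weights, lam):
    """L1 regularization term: lam times the sum of absolute weights."""
    return lam * sum(abs(w) for w in weights)


def l2_penalty(weights, lam):
    """L2 regularization term: lam times the sum of squared weights."""
    return lam * sum(w * w for w in weights)


# Made-up validation losses: they improve, then start rising again.
val_losses = [0.90, 0.70, 0.55, 0.50, 0.52, 0.56, 0.61]
stop_epoch = early_stopping_epoch(val_losses)
```

The penalty value would be added to the training loss, so larger weights make the loss larger and are discouraged.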

9: Why can’t we perform a classification problem using linear regression?

The fundamental reason why we cannot utilize linear regression for a classification problem is that classification needs discrete and finite output values, whereas linear regression produces continuous and unbounded output values.

A common workaround is to pass the linear output through a sigmoid function, but if we keep the mean squared error loss of linear regression, the error function graph won’t be convex.

In a convex graph, there is only one minimum, also known as the global minimum, but in a non-convex graph, there is a chance that our model will become trapped at a local minimum that isn’t necessarily the global minimum.

To keep the model from becoming stuck at a local minimum, we avoid using the linear regression approach for a classification task.
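The difference between unbounded and bounded outputs can be illustrated as follows. The weight and bias values are arbitrary assumptions; the sigmoid is the squashing function that logistic regression uses to turn a linear output into a value between 0 and 1.

```python
import math


def linear_output(x, w=2.0, b=-1.0):
    """Plain linear model: the output can be any real number."""
    return w * x + b


def sigmoid(z):
    """Squash any real number into the open interval (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


for x in (-10, 0, 10):
    z = linear_output(x)
    print(x, z, sigmoid(z))
```

The raw linear outputs fall far outside the range of valid class probabilities, while the squashed values can be thresholded (for example at 0.5) to obtain a discrete class.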

10: Why do we normalize data?

We employ normalization techniques to bring all the features to a given scale or range of values in order to ensure stable and quick model training.

If normalization is not done, there is a chance that the gradient will oscillate back and forth rather than converge to the global or local minimum.
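Two common ways of bringing features to a comparable scale are min-max scaling and standardization. The sketch below implements both in plain Python; the feature values are hypothetical, and the helpers assume the values are not all identical.

```python
def min_max_scale(values):
    """Rescale values linearly to the range [0, 1]."""
    lo, hi = min(values), max(values)
    return [(v - lo) / (hi - lo) for v in values]


def standardize(values):
    """Rescale values to zero mean and unit (population) standard deviation."""
    mean = sum(values) / len(values)
    std = (sum((v - mean) ** 2 for v in values) / len(values)) ** 0.5
    return [(v - mean) / std for v in values]


# Two features on very different scales (made-up numbers).
incomes = [20000.0, 50000.0, 80000.0]
ages = [20.0, 35.0, 50.0]

scaled_incomes = min_max_scale(incomes)
scaled_ages = min_max_scale(ages)
standardized_incomes = standardize(incomes)
```

After scaling, both features occupy the same range, so neither dominates the gradient updates merely because of its units.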

11: What distinguishes recall from precision?

Precision is defined as the ratio of true positives (TP) to all cases that the model predicted as positive (TP+FP).

In other words, precision measures the proportion of truly positive examples among the predicted positive examples.

It is an indicator of how well the model can predict positive outcomes without producing false positives.

Recall, in contrast, is the ratio of true positives (TP) to the total number of cases that genuinely belong to the positive class (TP+FN).

Recall indicates the proportion of real positive examples that the model correctly identifies. It is a gauge of the model’s ability to find all positive examples while avoiding false negatives.
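The two formulas translate directly into code. The counts below are made up for illustration.

```python
def precision(tp, fp):
    """Share of predicted positives that are truly positive: TP / (TP + FP)."""
    return tp / (tp + fp)


def recall(tp, fn):
    """Share of actual positives that the model found: TP / (TP + FN)."""
    return tp / (tp + fn)


# Hypothetical confusion-matrix counts.
tp, fp, fn = 40, 10, 20

model_precision = precision(tp, fp)  # 40 of 50 predicted positives are correct
model_recall = recall(tp, fn)        # 40 of 60 actual positives were found
```

Here the model is fairly precise but misses a third of the real positives, which is the kind of gap the two metrics are meant to expose.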

12: What distinguishes upsampling from downsampling?

The upsampling method increases the number of samples in the minority class by adding randomly chosen samples from the minority class to the dataset and repeating the process until the classes are balanced.

Because the model then sees the same minority samples more than once in each epoch, training accuracy increases, but this has the drawback that the same high accuracy is often not reflected in validation accuracy.

In the case of downsampling, we reduce the number of samples in the majority class by randomly choosing as many points from it as there are data points in the minority class, which gives an even distribution.
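Both techniques amount to random sampling, with or without replacement. The following is a minimal sketch using only the standard library; the function names and the toy dataset are assumptions for this example.

```python
import random


def upsample(minority, target_size, rng):
    """Add randomly chosen minority samples (with replacement) up to target_size."""
    extra = [rng.choice(minority) for _ in range(target_size - len(minority))]
    return minority + extra


def downsample(majority, target_size, rng):
    """Keep a random subset of the majority class (without replacement)."""
    return rng.sample(majority, target_size)


rng = random.Random(0)  # fixed seed so the sketch is reproducible
majority = [("neg", i) for i in range(10)]
minority = [("pos", i) for i in range(3)]

upsampled_minority = upsample(minority, len(majority), rng)
downsampled_majority = downsample(majority, len(minority), rng)
```

Upsampling repeats existing minority rows, which is why the model can end up memorizing them, whereas downsampling discards majority rows and therefore some information.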

13: What is data leakage and how can we identify it?

Data leakage occurs when the input attributes contain information about the target variable that would not be available when making real predictions. It often shows up as a suspiciously strong correlation between an input attribute and the target variable.

When we train our model with such a feature, the model only needs to perform a small amount of work to attain high accuracy because it already has the majority of the knowledge about the target variable.

In this instance, the model performs very well on both the training and validation data, but when we use that model to actually make predictions, its performance falls short of expectations. This gap is how data leakage can be identified.

14: Describe the metrics in the classification report.

The classification report lists classification metrics, including precision, recall, and f1-score, on a per-class basis.

Precision is the capacity of a classifier to avoid labeling a sample as positive when it is actually negative. It is the ratio of true positives to the total of true positives and false positives for each class.

A classifier’s recall is its capacity to find all positive samples. It is the ratio of true positives to the total of true positives and false negatives for each class.

The F1-score is the harmonic mean of precision and recall. Support is the number of samples of each class in the evaluated data.

A high-level evaluation of the model’s performance can be obtained by looking at its overall accuracy score. It is the proportion of accurate predictions out of all predictions made.

The macro avg is the unweighted mean of the per-class metric values (precision, recall, and f1-score).

The weighted avg is the mean of the per-class metric values weighted by support, so classes with more samples in the dataset count for more.
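The difference between the macro and weighted averages is easiest to see on an imbalanced example. The per-class numbers and class names below are invented for illustration.

```python
def f1_score(precision, recall):
    """Harmonic mean of precision and recall."""
    return 2 * precision * recall / (precision + recall)


# Hypothetical per-class metrics; "support" is the number of samples per class.
per_class = {
    "cat": {"precision": 0.9, "recall": 0.8, "support": 80},
    "dog": {"precision": 0.5, "recall": 0.6, "support": 20},
}

for metrics in per_class.values():
    metrics["f1"] = f1_score(metrics["precision"], metrics["recall"])

total_support = sum(m["support"] for m in per_class.values())

# Macro average: every class counts equally.
macro_f1 = sum(m["f1"] for m in per_class.values()) / len(per_class)

# Weighted average: every class counts in proportion to its support.
weighted_f1 = sum(m["f1"] * m["support"] for m in per_class.values()) / total_support

print(round(macro_f1, 3), round(weighted_f1, 3))
```

Because the better-performing class is also the larger one here, the weighted average comes out higher than the macro average.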

15: Which of the random forest regressor’s hyperparameters helps prevent overfitting?

A Random Forest’s most crucial hyper-parameters are:

max_depth – Overfitting sometimes results from trees that are too deep. Reducing the maximum depth helps avoid it.

n_estimators – The number of decision trees we want in our forest.

min_samples_split – The minimum number of samples that an internal node must contain before it can be split into further nodes.

max_leaf_nodes – It limits the number of leaf nodes, which regulates the splitting of the nodes and in turn limits the depth of the model.
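As a configuration sketch, these hyper-parameters could be collected in a dictionary. The parameter names follow scikit-learn's `RandomForestRegressor`; the values are arbitrary starting points assumed for illustration, not recommendations.

```python
# Hypothetical starting values; tune them against validation data.
rf_params = {
    "n_estimators": 200,      # number of trees in the forest
    "max_depth": 8,           # cap on how deep each tree may grow
    "min_samples_split": 10,  # samples a node needs before it may split
    "max_leaf_nodes": 64,     # cap on the number of leaves per tree
}

# With scikit-learn installed, the dictionary could be passed on like this:
#   from sklearn.ensemble import RandomForestRegressor
#   model = RandomForestRegressor(**rf_params)
```

Lower values of `max_depth` and `max_leaf_nodes` and higher values of `min_samples_split` all make individual trees simpler, which is what counteracts overfitting.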

16: What does the bias-variance tradeoff entail?

Bias refers to the gap between the model’s predicted values and the actual values.

Low bias indicates that the model has picked up on the pattern in the data, whereas high bias indicates underfitting, that is, the model’s inability to pick up on the patterns in the data.

Variance describes how much the model’s performance changes when it makes predictions on data on which it has not been trained.

High variance indicates that the performance on the training data and the validation data differ significantly, while low variance is a favorable scenario.

Overfitting occurs when the bias is low while the variance is excessive, and underfitting occurs when the bias is high. Finding a balance between these two circumstances is known as the bias-variance trade-off.

17: Is it always required to split the data into train and test sets in an 80:20 ratio?

No, it is not a requirement that the data be divided in an 80:20 ratio. The main goal of the splitting is to obtain some data that the model has never seen before so that we can assess the model’s performance.

From a dataset with, let’s say, 50,000 rows of data, only 1,000 or perhaps 2,000 rows may be required to assess the model’s performance.
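A split by absolute row count rather than by a fixed ratio can be sketched as follows. The helper name `train_test_split_rows` and the integer stand-ins for rows are assumptions for this example.

```python
import random


def train_test_split_rows(rows, test_size, seed=0):
    """Shuffle the rows and hold out exactly test_size of them for testing."""
    shuffled = list(rows)
    random.Random(seed).shuffle(shuffled)
    return shuffled[test_size:], shuffled[:test_size]


rows = list(range(50_000))  # stand-in for 50,000 rows of data
train_rows, test_rows = train_test_split_rows(rows, test_size=2_000)

print(len(train_rows), len(test_rows))
```

This corresponds to a 96:4 split, which leaves more data for training while still holding out rows the model has never seen.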

18: What is Principal Component Analysis?

With PCA (Principal Component Analysis), an unsupervised machine learning dimensionality reduction method, we trade off part of the data’s content or patterns for a significant size reduction.

With this approach, we typically want to retain 95% or more of the variance from the original dataset. For very high-dimensional data, we can drastically reduce the data size, sometimes at the cost of only 1% of the variance.

This approach is useful for image compression and for visualizing high-dimensional data.
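The notion of retained variance can be illustrated in two dimensions, where the eigenvalues of the covariance matrix have a closed form. This is a simplified sketch: the function name and the data points are hypothetical, and practical PCA implementations handle any number of dimensions.

```python
import math


def explained_variance_ratio_2d(points):
    """Share of total variance captured by each principal component of 2-D data."""
    n = len(points)
    mean_x = sum(x for x, _ in points) / n
    mean_y = sum(y for _, y in points) / n
    var_x = sum((x - mean_x) ** 2 for x, _ in points) / (n - 1)
    var_y = sum((y - mean_y) ** 2 for _, y in points) / (n - 1)
    cov_xy = sum((x - mean_x) * (y - mean_y) for x, y in points) / (n - 1)
    # Eigenvalues of the symmetric covariance matrix [[var_x, cov_xy], [cov_xy, var_y]].
    trace = var_x + var_y
    det = var_x * var_y - cov_xy ** 2
    root = math.sqrt(max(trace ** 2 - 4 * det, 0.0))
    eigenvalues = [(trace + root) / 2, (trace - root) / 2]
    return [ev / trace for ev in eigenvalues]


# Made-up points lying almost on a straight line.
points = [(1.0, 2.0), (2.0, 4.1), (3.0, 5.9), (4.0, 8.2), (5.0, 9.9)]
ratios = explained_variance_ratio_2d(points)
print(ratios)
```

Since the points lie almost on a line, the first component alone keeps well over 95% of the variance, so the second dimension could be dropped with little loss.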

19: What is one-shot learning?

One-shot learning is a machine learning concept in which the model is taught to identify patterns from a single example per class rather than from numerous examples.

This is helpful when we don’t have much training data. It is used, for example, to identify resemblances and differences between two photos.

20: What distinguishes Euclidean distance from Manhattan distance?

Two methods of measuring distance are the Manhattan Distance and the Euclidean Distance.

The total absolute difference between the coordinates of two points along each dimension is used to determine the Manhattan Distance (MD).

The square root of the sum of the squared differences between the coordinates of two points along each dimension is used to determine the Euclidean Distance (ED).

Clustering algorithms commonly use these two measures to determine how close data points are to one another, and the metrics that assess the resulting clusters rely on them as well.
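Both definitions are short enough to write out directly. The two points below are arbitrary examples.

```python
import math


def manhattan_distance(p, q):
    """Sum of absolute coordinate differences."""
    return sum(abs(a - b) for a, b in zip(p, q))


def euclidean_distance(p, q):
    """Square root of the sum of squared coordinate differences."""
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(p, q)))


p, q = (1.0, 2.0), (4.0, 6.0)
md = manhattan_distance(p, q)  # |1 - 4| + |2 - 6| = 7
ed = euclidean_distance(p, q)  # sqrt(3**2 + 4**2) = 5
```

The Manhattan distance follows the axes like a route along city blocks, so it is never smaller than the straight-line Euclidean distance between the same two points.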
