Boosting in Machine Learning

Most supervised machine learning methods are built on a single predictive model, such as linear regression, logistic regression, or ridge regression.

However, techniques such as bagging and random forests build many models from repeated bootstrapped samples of the original dataset. Predictions on new data are made by averaging the predictions of the individual models.

These techniques follow the procedure below, which tends to yield better predictive accuracy than techniques that use only a single predictive model.

First, build individual models with high variance and low bias (e.g. deeply grown decision trees).

Then, to reduce the variance, average the predictions of the individual models.
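The two steps above can be sketched in a few lines of plain Python. This is only an illustration under stated assumptions: a 1-nearest-neighbour predictor stands in for a deeply grown decision tree (both follow the training data very closely), and the function names, the toy data, and the choice of 50 models are hypothetical.

```python
import random
import statistics

def fit_one_nearest_neighbor(sample):
    """Stand-in for a deep tree: a high-variance, low-bias model that
    predicts the y value of the closest training x."""
    def predict(x):
        return min(sample, key=lambda point: abs(point[0] - x))[1]
    return predict

def bagged_predict(data, x, n_models=50, seed=0):
    """Average the predictions of models fitted to bootstrapped samples."""
    rng = random.Random(seed)
    predictions = []
    for _ in range(n_models):
        # Bootstrap: draw len(data) points from the data with replacement.
        bootstrap = [rng.choice(data) for _ in data]
        model = fit_one_nearest_neighbor(bootstrap)
        predictions.append(model(x))
    return statistics.mean(predictions)

# Toy data (an assumption for illustration): y = 2x plus Gaussian noise.
noise = random.Random(42)
data = [(i / 10, 2 * (i / 10) + noise.gauss(0, 1)) for i in range(50)]

single_model = fit_one_nearest_neighbor(data)
print("single model:", single_model(2.5))
print("bagged model:", bagged_predict(data, 2.5))
```

The single model simply repeats one noisy training value, while the bagged prediction averages over many resampled fits, which is where the variance reduction comes from.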

Boosting is a different technique that often yields even larger gains in predictive accuracy.

What is “boosting”?

Boosting is a technique that can be applied to any type of model, but it is most frequently used with decision trees.

Boosting’s basic premise is as follows:

- Create a weak model first.

A model is considered “weak” if its error rate is only slightly better than random guessing. In practice, this is usually a decision tree with only one or two splits.

- Create a new weak model based on the prior model’s residuals.

In practice, we fit a new model to the residuals of the previous model (i.e., the errors in its predictions), so that the combined model has a slightly lower overall error rate.

- Keep going until k-fold cross-validation tells us to stop.

In practice, we use k-fold cross-validation to decide when to stop growing the boosted model.

By repeatedly creating new trees that enhance the performance of the prior tree, we can start with a weak model and keep “boosting” its performance until we arrive at a final model with high prediction accuracy.
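The loop described above can be sketched as follows for a regression problem with squared error. This is a minimal sketch, not a reference implementation: the weak model is a one-split tree (a “stump”), the function names are hypothetical, and the `learning_rate` shrinkage factor is an assumption that the text above does not mention. The code also assumes the inputs contain at least two distinct x values.

```python
def fit_stump(xs, targets):
    """Fit a one-split regression tree by minimizing squared error."""
    best = None
    # Skip the largest x so that the right-hand side is never empty.
    for threshold in sorted(set(xs))[:-1]:
        left = [t for x, t in zip(xs, targets) if x <= threshold]
        right = [t for x, t in zip(xs, targets) if x > threshold]
        left_mean = sum(left) / len(left)
        right_mean = sum(right) / len(right)
        sse = (sum((t - left_mean) ** 2 for t in left)
               + sum((t - right_mean) ** 2 for t in right))
        if best is None or sse < best[0]:
            best = (sse, threshold, left_mean, right_mean)
    _, best_threshold, best_left, best_right = best
    return lambda x: best_left if x <= best_threshold else best_right

def boost(xs, ys, n_rounds=50, learning_rate=0.1):
    """Start from the mean, then repeatedly fit stumps to the residuals."""
    base = sum(ys) / len(ys)
    stumps = []
    predictions = [base] * len(ys)
    for _ in range(n_rounds):
        # Residuals are the errors of the current combined model.
        residuals = [y - p for y, p in zip(ys, predictions)]
        stump = fit_stump(xs, residuals)
        stumps.append(stump)
        predictions = [p + learning_rate * stump(x)
                       for x, p in zip(xs, predictions)]

    def predict(x):
        return base + learning_rate * sum(s(x) for s in stumps)
    return predict

def mean_squared_error(actual, predicted):
    return sum((a - p) ** 2 for a, p in zip(actual, predicted)) / len(actual)

# Toy data (an assumption for illustration): a noiseless quadratic.
xs = [i / 10 for i in range(40)]
ys = [x ** 2 for x in xs]

model = boost(xs, ys)
baseline_mse = mean_squared_error(ys, [sum(ys) / len(ys)] * len(ys))
boosted_mse = mean_squared_error(ys, [model(x) for x in xs])
print("baseline MSE:", baseline_mse)
print("boosted MSE:", boosted_mse)
```

Each stump on its own is a poor model, but adding a small multiple of every new stump to the running prediction steadily reduces the training error.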

Boosting: Why Does It Work?

It turns out that boosting can produce some of the most powerful machine learning models.

Because they often outperform other types of models, boosted models are used as the go-to production models in many industries.

Understanding a straightforward concept is key to understanding why boosted models perform so well.

- To start, boosted models build a weak decision tree with low predictive accuracy. This decision tree is said to have high bias and low variance.

- As boosted models iteratively improve on earlier decision trees, the overall model gradually lowers the bias at each step without significantly raising the variance.

- The final fitted model typically has sufficiently low bias and variance, which results in a model that achieves low test error rates on new data.
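One way to watch this happen is to record the error after every boosting round. The sketch below is an illustration under several assumptions: it uses a single hold-out split as a simpler stand-in for k-fold cross-validation (which would repeat the same measurement over k splits and average the curves), the weak model is a one-split tree, and the names, toy data, and `learning_rate` value are hypothetical.

```python
import math
import random

def fit_stump(xs, targets):
    """Fit a one-split regression tree; return (threshold, left_mean, right_mean)."""
    best = None
    for threshold in sorted(set(xs))[:-1]:
        left = [t for x, t in zip(xs, targets) if x <= threshold]
        right = [t for x, t in zip(xs, targets) if x > threshold]
        left_mean = sum(left) / len(left)
        right_mean = sum(right) / len(right)
        sse = (sum((t - left_mean) ** 2 for t in left)
               + sum((t - right_mean) ** 2 for t in right))
        if best is None or sse < best[0]:
            best = (sse, threshold, left_mean, right_mean)
    return best[1:]

def stump_predict(stump, x):
    threshold, left_mean, right_mean = stump
    return left_mean if x <= threshold else right_mean

def mse(actual, predicted):
    return sum((a - p) ** 2 for a, p in zip(actual, predicted)) / len(actual)

def staged_errors(train, valid, n_rounds=100, learning_rate=0.1):
    """Return per-round training and validation MSE for boosted stumps."""
    train_x, train_y = zip(*train)
    valid_x, valid_y = zip(*valid)
    base = sum(train_y) / len(train_y)
    train_pred = [base] * len(train_y)
    valid_pred = [base] * len(valid_y)
    train_curve, valid_curve = [], []
    for _ in range(n_rounds):
        residuals = [y - p for y, p in zip(train_y, train_pred)]
        stump = fit_stump(train_x, residuals)
        train_pred = [p + learning_rate * stump_predict(stump, x)
                      for x, p in zip(train_x, train_pred)]
        valid_pred = [p + learning_rate * stump_predict(stump, x)
                      for x, p in zip(valid_x, valid_pred)]
        train_curve.append(mse(train_y, train_pred))
        valid_curve.append(mse(valid_y, valid_pred))
    return train_curve, valid_curve

# Toy data (an assumption for illustration): a noisy sine curve.
rng = random.Random(0)
points = []
for _ in range(120):
    x = rng.uniform(0, 3)
    points.append((x, math.sin(3 * x) + rng.gauss(0, 0.3)))
train, valid = points[:80], points[80:]

train_curve, valid_curve = staged_errors(train, valid)
best_round = valid_curve.index(min(valid_curve)) + 1
print("training MSE, first and last round:", train_curve[0], train_curve[-1])
print("round with lowest validation MSE:", best_round)
```

The training error never increases from one round to the next in this setup, which reflects the falling bias. The validation curve is the one to use when choosing how many rounds to keep.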

Advantages and Disadvantages of Boosting

The obvious advantage of boosting is that it can produce models with high predictive accuracy compared with almost all other types of models.

One potential downside is that a fitted boosted model is very difficult to interpret. Although it may be very good at predicting the response values of new data, the exact process it uses to do so is hard to explain.

In practice, most data scientists and machine learning practitioners build boosted models because they want to predict the response values of new data accurately. Consequently, the fact that boosted models are hard to interpret is usually not a problem.

Popular boosting algorithms and libraries include:

- XGBoost

- AdaBoost

- CatBoost

- LightGBM

One of these approaches may work better than the others depending on the size of your dataset and the processing power of your machine.

Further Resources:-

Because the greatest way to learn any programming language, even R, is by doing.

Change ggplot2 Theme Color in R- Data Science Tutorials

Artificial Intelligence Examples-Quick View – Data Science Tutorials

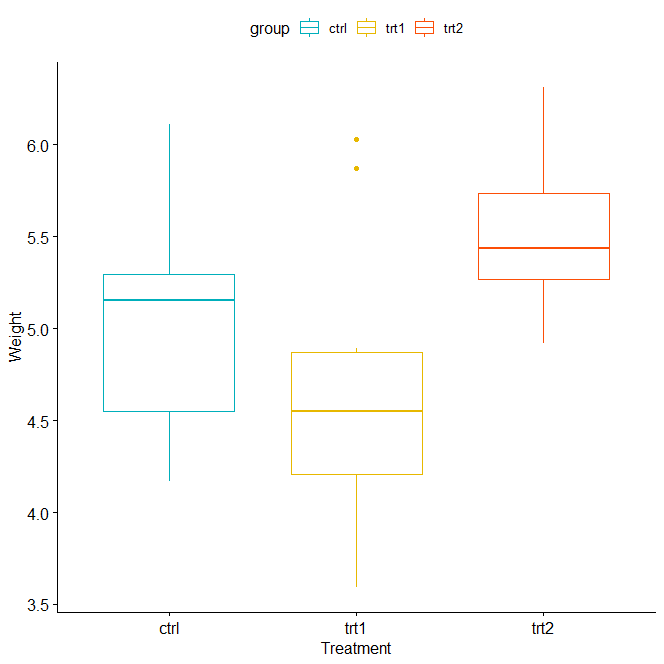

How to perform the Kruskal-Wallis test in R? – Data Science Tutorials