Learn Hadoop for Data Science, Are you wondering why learning Hadoop is necessary for data science? You are on the appropriate page.

You can read more about why Hadoop is essential for data scientists here. This article’s conclusion will include a case study showing how Marks & Spencer Company uses Hadoop to meet its data science needs.

So, without further ado, let’s get to the point:

Data is growing at an exponential rate right now. Processing a large amount of data is in high demand.

Hadoop is one such system in charge of handling massive amounts of data.

Top Data Science Applications You Should Know 2023 (datasciencetut.com)

What Does Hadoop Actually Mean?

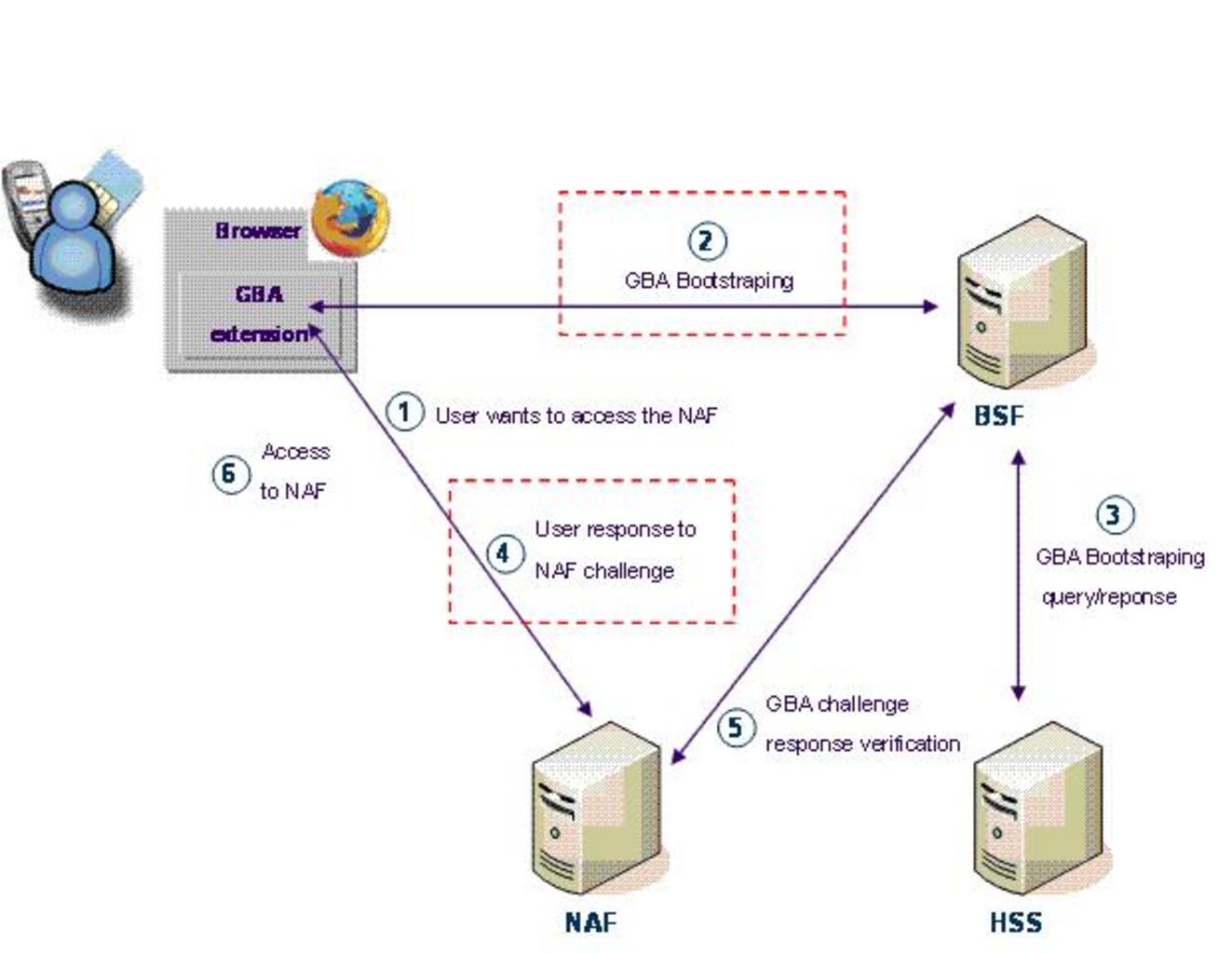

Apache Hadoop is free software that enables a network of computers to work together to solve problems that call for large amounts of data and processing power.

Due to its tremendous scalability, Hadoop can support computing on anything from a single server to a cluster of thousands of computers.

Despite being designed in Java, Hadoop supports a variety of programming languages, including Python, C++, Perl, Ruby, and more.

After Google published its research paper describing the Google File System and other Big Data technologies, such as MapReduce, the term became widely accepted.

Three main components of Hadoop

Hadoop Distributed Filesystem: This is Hadoop’s storage system. A group of master-slave networks makes up Hadoop.

On the master and slave nodes, respectively, the HDFS daemons namenode and data node execute.

Map-Reduce: This component of Hadoop is in charge of advanced data processing. It makes it easier to process a lot of data across a group of nodes.

YARN: It is utilized for job scheduling and resource management. It is challenging to manage, distribute, and release the resources in a multi-node cluster.

Hadoop Yarn makes it possible to efficiently manage and govern these resources.

Applications of Data Science in Education – Data Science Tutorials

Hadoop: A Necessity for Data Scientists?

The answer is a resounding YES! For data scientists, Hadoop is a need.

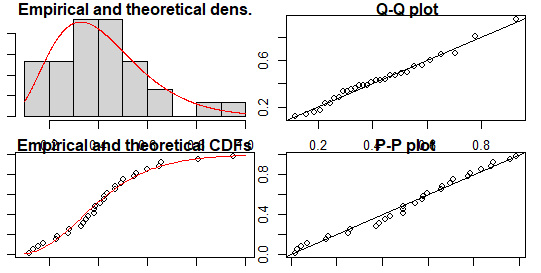

Data science is a broad discipline. It comes from a variety of interdisciplinary disciplines, including programming, statistics, and mathematics. Finding patterns in data is the goal.

Data scientists receive training in data extraction, analysis, and prediction. It is a catch-all phrase that refers to practically all data-related technologies.

Big Data storage is Hadoop’s primary role. Additionally, it enables users to store both organized and unstructured data in all forms.

For the study of massive amounts of data, Hadoop also offers modules like Pig and Hive.

Data science and big data

Data science is a discipline that encompasses all data operations, which is how it differs from big data. Big Data is therefore a component of data science.

Big Data knowledge is not required because Data Science contains a vast amount of information.

However, understanding Hadoop will undoubtedly broaden your experience and enable you to handle massive amounts of data with flexibility.

Additionally, this will significantly raise your market value and provide you with an advantage over rivals.

Additionally, an understanding of machine learning is essential for data scientists. Larger datasets significantly improve the performance of machine learning systems.

As such, big data becomes an ideal choice for training machine learning algorithms. Therefore, in order to understand the intricacies of Data Science, knowledge of big data is a must.

As the aforementioned figure plainly illustrates, Hadoop is essential and the very first step in the process of becoming a data scientist.

Lottery Prediction-Comparison between Statistics and Luck (datasciencetut.com)

For data operations involving huge-scale data, Hadoop is one of the well-known big data platforms that is most frequently employed.

You need to be familiar with handling vast volumes of unstructured data in order to take the first step toward becoming a fully-fledged data scientist.

Hadoop shows to be the perfect platform for this use, enabling users to resolve issues involving enormous amounts of data.

Additionally, Hadoop is a perfect data platform that gives you the capacity to handle massive amounts of data as well as analyze it using a variety of extensions like Mahout and Hive.

Therefore, mastering Hadoop as a whole will provide you the capacity to manage a variety of data processes, which is a data scientist’s primary responsibility.

Learning Hadoop as a starting tool will provide you with all the knowledge you need because it makes up a significant percentage of data science.

Starting your Big Data Hadoop Training now will help you seize the opportunity before someone else does.

Writing Java machine learning code over map-reduce in the Hadoop ecosystem becomes a very challenging task.

It becomes challenging to include machine learning operations like classification, regression, and clustering into a MapReduce architecture.

Pig and Hive are the two primary Hadoop components that Apache published to make data analysis easier.

Furthermore, the Apache Software Foundation developed Apache Mahout for doing machine learning operations on the data.

Hadoop, which uses the MapReduce paradigm as its primary paradigm, is built upon by Apache Mahout.

A data scientist must be knowledgeable about every operation involving data. As a result, having knowledge of Big Data and Hadoop will enable you to create an elaborate architecture that analyses enormous amounts of data.

Rejection Region in Hypothesis Testing – Data Science Tutorials

Why use Hadoop?

Hadoop: A Scalable Big Data Solution

The Hadoop Ecosystem has received praise for its scalability and dependability. It gets harder for database systems to handle expanding information as a result of the enormous increase in information.

Massive amounts of data can be stored in Hadoop’s fault-tolerant and scalable architecture without any data loss. Hadoop encourages two different sorts of scalability:

Vertical scalability is the capacity to increase the resources (like CPUs) available to a single node. By doing this, we expand the physical power of our Hadoop system.

In order to increase its strength and power, we may also add extra RAM and CPU to it.

Adding extra nodes or systems to the distributed software system is known as horizontal scaling.

We can increase the number of computers without having to stop the system, in contrast to vertical scalability’s approach to capacity expansion.

By doing this, the issue of downtime is resolved, and scaling out is most effective. Additionally, this renders numerous parallel-working devices.

Free Best Online Course For Statistics – Data Science Tutorials

Structure of Hadoop

The following are some of the key elements of Hadoop:

- Hadoop Distributed File System (HDFS)

- MapReduce

- YARN

- Hive

- Pig

- HBase

Hadoop has been utilized more and more in recent years to develop data science techniques in various industries.

Industries have been able to completely utilize data science thanks to the integration of big data and data science. The four main ways that Hadoop has affected data scientists are as follows:

1. Data exploration using massive datasets

Data scientists must manage vast amounts of data. Data scientists were previously limited to storing their datasets on local machines.

Hadoop, however, offers a setting for exploratory data analysis in response to the growth in data and the enormous demand for big data research.

In order to get results using Hadoop, you can write a MapReduce job, a HIVE script, or a PIG script and execute it directly on Hadoop over the entire dataset.

2. Preparation of a lot of data

The majority of data preprocessing is performed via data capture, transformation, cleansing, and feature extraction in data science roles.

This process is necessary to convert unstandardized feature vectors from raw data.

Large-scale data preparation is made simple for data scientists by Hadoop. It offers instruments for effectively managing massive amounts of data, including MapReduce, PIG, and Hive.

Get the first value in each group in R? – Data Science Tutorials

3. Making Data Agility Required

Hadoop offers its customers a flexible schema in contrast to conventional database systems, which demanded a rigid schema structure.

Every time a new field is required, this adaptable schema, also known as “schema on read,” eliminates the requirement for schema revision.

4. Making large-scale data mining easier

Larger datasets have been shown to improve machine learning algorithm training and output.

There is a large variety of statistical techniques available thanks to methods like clustering, outlier identification, and product recommenders.

In the past, machine learning engineers only had access to a small amount of data, which led to the poor performance of their models.

However, you can save all the data in RAW format with the aid of the Hadoop ecosystem, which offers linearly scalable storage.

How to create a Sankey plot in R? – Data Science Tutorials

Was this post helpful? Please provide feedback in the comments. This will enable us to provide you with more of these engaging articles.

Data Science Applications in Banking – Data Science Tutorials